3.5 Central Limit Theorem

In Module 6, we will cover the foundations of statistical tests. However, to understand what those tests tell us and how useful they are, it is important to look at what allows them to work in the first place. This is where the Central Limit Theorem comes in, one of the most important concepts in all of statistics. We will also look at standard error, as this becomes a crucial concept for the next module!

3.5.1 The sampling distribution of the mean

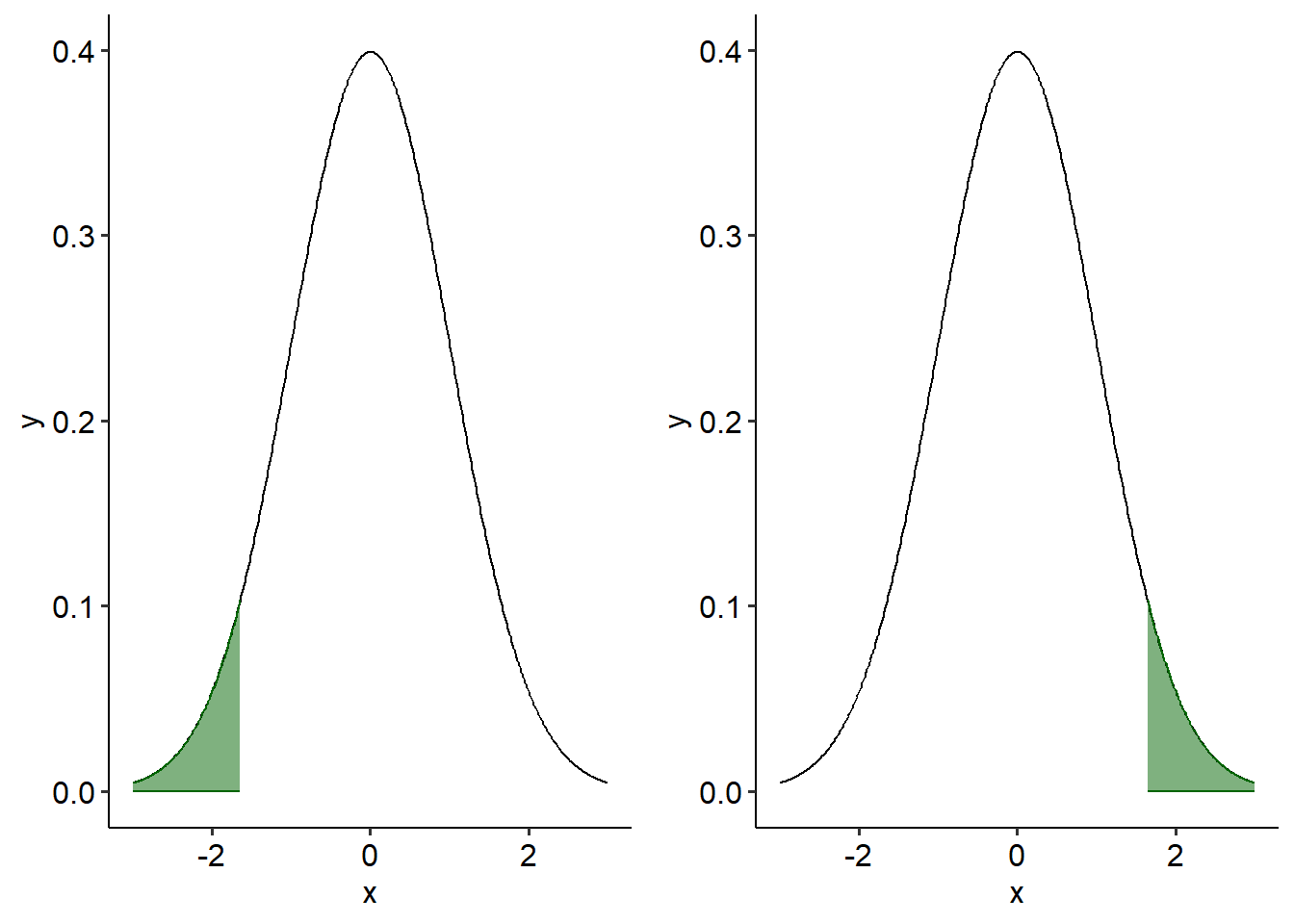

Imagine that I have a population of 100 regular people (shown on the left). I take a sample of 10 people, measure their heights and then calculate the mean height of that one sample. I then repeat this process over and over again, and plot where each sample’s mean falls. Because every sample is slightly different, the mean of each sample will be slightly different too; this is sampling error. Some sample means will be lower than the true population mean, while some will be higher. Eventually, we might end up with something like the spread shown on the right of the figure.

The spread of these sample means is called the sampling distribution of the mean (SDoTM), shown on the right.
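The repeated-sampling process described above can be sketched in a few lines of Python. This is only an illustration: the population of 100 heights is simulated from a normal distribution with an assumed mean of 170 cm and SD of 10 cm, and the number of repetitions (5,000) is an arbitrary choice.

```python
import random
import statistics

rng = random.Random(42)  # fixed seed so the sketch is repeatable

# Hypothetical population: 100 heights in cm (assumed mean 170, SD 10).
population = [rng.gauss(170, 10) for _ in range(100)]
population_mean = statistics.mean(population)

def sample_mean(pop, n, rng):
    """Draw one sample of size n (without replacement) and return its mean."""
    return statistics.mean(rng.sample(pop, n))

# Repeat the sampling process many times and keep every sample mean.
sample_means = [sample_mean(population, 10, rng) for _ in range(5000)]

print(f"Population mean:      {population_mean:.2f}")
print(f"Mean of sample means: {statistics.mean(sample_means):.2f}")
print(f"SD of population:     {statistics.pstdev(population):.2f}")
print(f"SD of sample means:   {statistics.pstdev(sample_means):.2f}")
```

The list `sample_means` is the simulated sampling distribution of the mean. Its centre should sit close to the population mean, while its spread should be noticeably narrower than the spread of individual heights.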

3.5.2 The Central Limit Theorem

The hypothetical height example above demonstrates the Central Limit Theorem (CLT), a fundamental theorem of probability theory. It states that, provided the observations are independent, drawn from the same population, and that population has a finite variance, the sampling distribution of the mean will approach a normal distribution as the sample size grows. This occurs even when the original data are not normally distributed.

The Central Limit Theorem is closely related to the law of large numbers, another fundamental theorem of probability. The law of large numbers states that given a large enough sample, our estimates of a probability or phenomenon should converge on the true value. For example, consider a regular six-sided die. If the die is fair, each possible outcome or number should have a 1/6 chance of being rolled. Therefore, if we were to roll a single die 100,000 times, we should see each number come up in roughly 1 in 6 rolls (16.67%).
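As a rough sketch of the die example, the snippet below simulates rolls of a fair die and reports how far the observed proportions stray from 1/6. The function name `face_proportions`, the seed and the smaller roll counts are illustrative choices, not part of the original example.

```python
import random
from collections import Counter

rng = random.Random(1)  # fixed seed so the sketch is repeatable

def face_proportions(n_rolls, rng):
    """Roll a fair six-sided die n_rolls times; return the proportion of each face."""
    counts = Counter(rng.randint(1, 6) for _ in range(n_rolls))
    return {face: counts[face] / n_rolls for face in range(1, 7)}

for n_rolls in (60, 6_000, 100_000):
    props = face_proportions(n_rolls, rng)
    # Largest distance between any observed proportion and the true 1/6.
    worst = max(abs(p - 1 / 6) for p in props.values())
    print(f"{n_rolls:>7} rolls: largest gap from 1/6 = {worst:.4f}")
```

With only 60 rolls the proportions can be some way off; by 100,000 rolls every face should sit very close to 0.1667.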

The CLT utilises the same principle. If we conduct studies with large enough samples then our estimates of a parameter should converge (or at least get pretty close) to the true population value.

A general rule of thumb for sample sizes is that n > 30 is usually sufficient, even when the population is skewed. In other words, even if a population is skewed on a variable, the means of repeated samples of n > 30 will still be approximately normally distributed (very heavily skewed populations may need larger samples). You can see this for yourself in the simulator in Section 3.5.4 - try setting the sample size to 5, 10 and then 30, and see what happens in the Sampling Distribution tab.
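The effect of sample size on a skewed population can also be checked numerically. The sketch below is a minimal illustration under stated assumptions: an exponential distribution stands in for the skewed population, and skewness (0 for a symmetric distribution) is used as a crude measure of how far the sample means are from a normal shape.

```python
import random

def skewness(values):
    """Skewness of a list of numbers; 0 for a perfectly symmetric distribution."""
    n = len(values)
    mean = sum(values) / n
    sd = (sum((v - mean) ** 2 for v in values) / n) ** 0.5
    return sum(((v - mean) / sd) ** 3 for v in values) / n

def simulate_sample_means(n, n_samples, rng):
    """Means of n_samples samples of size n from a right-skewed population."""
    # Assumption: an exponential distribution plays the skewed population.
    return [sum(rng.expovariate(1.0) for _ in range(n)) / n
            for _ in range(n_samples)]

rng = random.Random(2024)  # fixed seed so the sketch is repeatable
skew_by_n = {}
for n in (5, 10, 30):
    means = simulate_sample_means(n, 10_000, rng)
    skew_by_n[n] = skewness(means)
    print(f"n = {n:>2}: skewness of sample means = {skew_by_n[n]:.2f}")
```

The skewness of the sample means should shrink towards 0 as n grows, but it does not vanish entirely at n = 30, which is why n > 30 is a rule of thumb rather than a guarantee.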

3.5.3 Standard error of the mean

Coming back to the height example above, we can see that the sampling distribution on the right is centred in much the same place as the actual distribution on the left. This is a good thing! This gives us a sense of where the population mean (the parameter that we are interested in) might lie. With enough samples, the peak of this sampling distribution of the mean will converge around the population mean. As you can see in our hypothetical example, the peak of the sampling distribution of the mean sits pretty close to the original population mean, meaning our estimate is pretty good.

However, in a real research setting we typically do not take multiple repeated samples like this, and instead just take one. Thanks to the CLT, though, we know that if our sample is large enough, our sample mean is likely to fall closer to the true mean than it would with a small sample. This still allows us to make inferences about the population parameter with just one sample.

The standard error of the mean (standard error; SE) is another measure of variability - this time, it is the spread (the standard deviation) of sample means across the sampling distribution of the mean. It indicates how far a sample mean is likely to fall from the population mean, and is therefore one way of estimating the precision of our estimate. If our sampling distribution is wide, our standard error will be large - and that means that we won’t have a very precise estimate of the population mean. However, if we have a small standard error, our sample mean is likely to be close to the population mean.

Standard error is calculated using the formula below:

\[ SE = \frac{SD}{\sqrt{n}} \]

where SD is the standard deviation of the sample and n is the sample size.

Based on the formula alone, hopefully one critical element is clear: with bigger samples, the standard error decreases - and therefore, the sample mean should be closer to the population mean. Because n sits under a square root, the gains diminish: to halve the standard error, we need four times the sample size. This means that with large samples, we should ideally be getting a really good estimate of the population parameter of interest! Conversely, smaller samples (as is common in music research) tend to give less precise estimates of population parameters due to the inherently greater amount of sampling error involved. Therefore, we should always be aiming for larger sample sizes wherever possible for statistical analyses.
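To make the formula concrete, here is a small sketch that computes the standard error for a single sample and then shows how it shrinks as n grows. The eight height values and the fixed SD of 10 cm are made-up numbers for illustration, and `standard_error` is a hypothetical helper name.

```python
import math
import statistics

def standard_error(sample):
    """Standard error of the mean: sample SD divided by the square root of n."""
    return statistics.stdev(sample) / math.sqrt(len(sample))

# Hypothetical sample of eight heights in cm (made-up values).
heights = [162, 175, 168, 181, 159, 170, 177, 166]
print(f"SE from this sample: {standard_error(heights):.2f} cm")

# Holding SD fixed at an assumed 10 cm, quadrupling n halves the SE.
for n in (25, 100, 400):
    print(f"n = {n:>3}: SE = {10 / math.sqrt(n):.2f} cm")
```

With the SD held at 10 cm, the standard error is 2.00 cm at n = 25, 1.00 cm at n = 100 and 0.50 cm at n = 400.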

3.5.4 Simulation

To test this for yourself, try the sample simulator below. You can set which distribution you want to draw from, and choose how many samples and simulations you want to run.

The Population Distribution tab shows what you are sampling from, the Samples tab shows each individual sample, and the Sampling Distribution tab shows the distribution of sample means. Try changing the sample size and see how that affects the Sampling Distribution.

(You may need to scroll within the app to see the full output.)